Research

How do we explore learning, remembering and forgetting?

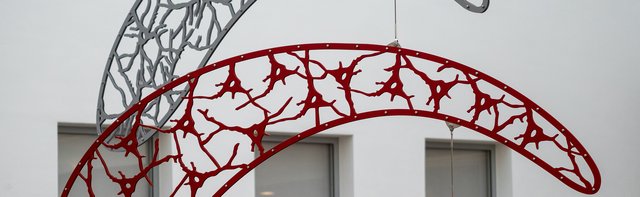

The LIN takes a comprehensive interdisciplinary approach to studying learning and memory mechanisms at different levels of brain organization – from molecules to single cells and local circuits to complex networks.

In four research programs, we explore learning and memory mechanisms in humans and animals. Using multimodal analyses of molecules, cells and circuits, our research units carry out experimental research in animals and humans in parallel and from a comparative perspective.

Our basic research on learning-relevant brain mechanisms has considerable potential for application, in particular to lifelong learning, to the understanding of learning and memory disorders, and to technical applications of learning concepts.